Every mobile team knows the feeling.

Tests aren’t inherently slow. A functional test takes seconds to execute. Even a comprehensive regression suite should complete in minutes.

Yet device testing takes hours.

The culprit isn’t your test code. It’s your infrastructure.

Last week, we explored the three gaps between testing and reality: the emulator gap, the coverage gap, and the visibility gap. This week, we go deep on Speed – the foundation that closes all three.

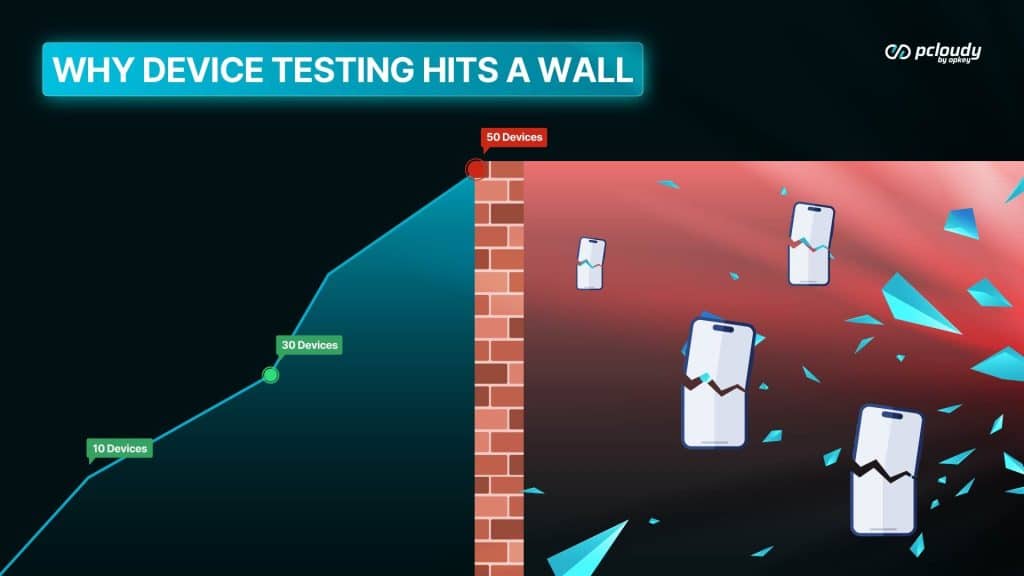

Every growing team hits a wall with device testing.

At 10 devices, everything works.

You know where each device is. Someone charges them when they’re low. Updates happen when someone remembers. Need a specific device? Walk over and grab it.

At 30 devices, cracks appear.

Devices start going missing. OS versions drift – some running current versions, some two years out of date. Someone becomes the unofficial “device manager,” spending hours each week tracking, charging, updating. Coordination happens over Slack: “Who has the Pixel?”

At 50+ devices, it breaks completely.

Full-time management becomes necessary. Devices are never available. Your “device lab” becomes a source of friction rather than capability. The management overhead scales faster than the testing benefit.

The wall exists because device testing doesn’t scale linearly.

More devices should mean more coverage. Instead, more devices mean more management. More management means more friction. More friction means slower testing.

This is why most teams stay stuck at 10-20 devices. Not because they don’t want coverage – they understand that 10 devices don’t represent their user base. But scaling device infrastructure is genuinely hard.

The wrong question is: “How do we manage more devices?” That question leads to more process, more overhead, more friction. The right question: “How do we get coverage without the management burden?”

That’s what Speed solves.

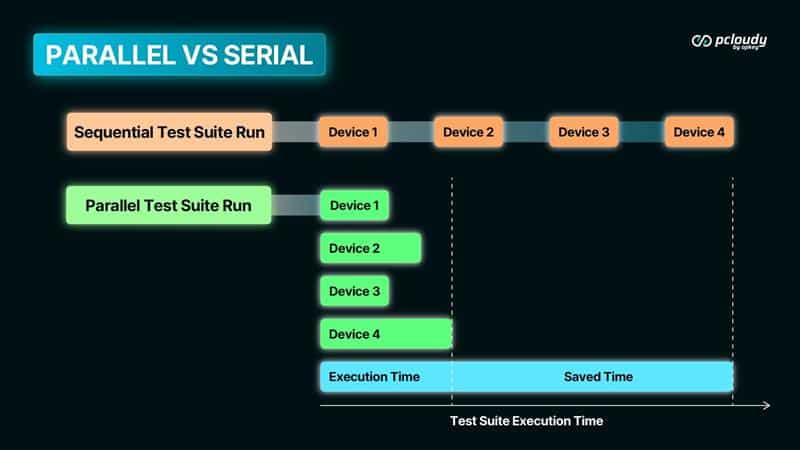

The Power of Parallel Testing

Traditional device testing is serial. One device at a time.

Run test on Device 1. Wait for completion. Run test on Device 2. Wait for completion. Run test on Device 3. Wait for completion. Repeat.

The math is brutal:

Serial execution:

- 50 devices

- 5 minutes per device

- Total time: 250 minutes (4+ hours)

Now imagine running on all 50 devices simultaneously.

Parallel execution:

- 50 devices

- 5 minutes per device

- Total time: 5-6 minutes

Same coverage. Same tests. About 98% less time.
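To make the arithmetic above concrete, here is a minimal sketch of the calculation. It assumes every device takes the same 5 minutes and ignores setup and teardown overhead, so it is an idealized model rather than a measurement. The function name `wall_clock_minutes` and the `parallel_slots` parameter are hypothetical names chosen for illustration.

```python
import math


def wall_clock_minutes(devices: int, minutes_per_device: float, parallel_slots: int) -> float:
    """Estimate total run time when at most `parallel_slots` devices run at once."""
    if devices <= 0 or parallel_slots <= 0:
        raise ValueError("devices and parallel_slots must be positive")
    # Devices run in waves; each wave lasts one full per-device run.
    waves = math.ceil(devices / parallel_slots)
    return waves * minutes_per_device


serial = wall_clock_minutes(50, 5, parallel_slots=1)     # one device at a time
parallel = wall_clock_minutes(50, 5, parallel_slots=50)  # all devices at once
saving = 1 - parallel / serial

print(f"serial: {serial} min, parallel: {parallel} min, saving: {saving:.0%}")
```

The same function also covers the in-between cases: with 10 parallel slots, the 50 devices run in 5 waves, so the estimate lands between the serial and fully parallel figures.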

This isn’t theoretical optimization. This is what changes when infrastructure supports parallel execution.

The old trade-off is dead.

For years, teams faced a painful choice: “Test on more devices OR ship faster. Pick one.”

This trade-off forced difficult decisions. Skip device coverage to meet deadlines. Or delay releases to test properly. Neither option is good.

Parallel execution eliminates the trade-off. You get comprehensive coverage AND speed. They’re no longer in conflict.

What parallel testing unlocks:

Test across Samsung Galaxy (budget to flagship). Test across the Pixel range. Test on Xiaomi, OnePlus, Oppo. Test on devices with 3GB RAM and devices with 12GB. Test on Android 11, 12, 13, 14.

All at once. In minutes.

Run comprehensive device testing on every pull request (PR) – not just before major releases. Catch device-specific bugs before they reach production. Get feedback while the code is still fresh in your head.

The constraint was never “we don’t want more coverage.” The constraint was time.

Parallel execution removes the time constraint.
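The difference between serial and parallel dispatch can be sketched with Python’s standard `concurrent.futures` module. This is an illustration under stated assumptions, not how any particular device cloud works: `run_suite` is a hypothetical stand-in that sleeps instead of driving a real device, and the device IDs are made up.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical device IDs, used only for illustration.
DEVICES = ["galaxy-a14", "galaxy-s24", "pixel-8", "redmi-note-12"]


def run_suite(device_id: str) -> tuple[str, bool]:
    """Hypothetical stand-in for running a test suite on one device."""
    time.sleep(0.1)  # simulates the per-device run time
    return device_id, True


def run_serial(devices):
    # One device at a time: total time grows with the number of devices.
    return [run_suite(d) for d in devices]


def run_parallel(devices):
    # One worker per device, so all devices run at the same time.
    with ThreadPoolExecutor(max_workers=len(devices)) as pool:
        return list(pool.map(run_suite, devices))


start = time.perf_counter()
serial_results = run_serial(DEVICES)
serial_elapsed = time.perf_counter() - start

start = time.perf_counter()
parallel_results = run_parallel(DEVICES)
parallel_elapsed = time.perf_counter() - start

print(f"serial: {serial_elapsed:.2f}s, parallel: {parallel_elapsed:.2f}s")
```

Both functions return the same results in the same order; only the wall-clock time differs. Threads suit this sketch because the work is waiting on a device, not computing locally.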

Test What Your Users Actually Have

There’s a gap between most device labs and actual user bases.

The typical device lab:

- 2 iPhones (latest and one generation back)

- 3-4 Samsung Galaxy (usually flagships)

- 1-2 Pixels

- Maybe a OnePlus or Xiaomi

- A few “older devices” someone brought from home

The typical user base (varies by market, but often):

- 40% Samsung (including budget models like A-series)

- 15% Xiaomi/Redmi

- 12% iPhone (multiple generations)

- 8% Oppo/Vivo

- 7% Realme

- 5% OnePlus

- 13% Everything else

The mismatch is where bugs hide.

Your tests pass on the Galaxy S24. But your users on the Galaxy A14 (with 3GB RAM and aggressive battery optimization) hit crashes.

Your tests pass on the iPhone 15. But your users on iPhone 11 (still on iOS 15) see layout issues.

Your tests pass on the Pixel 8. But your users on Xiaomi devices running MIUI find that your notifications don’t work correctly.

Strategic coverage means testing what your users actually have.

Not just flagships. Not just the devices your team personally owns. Not just whatever’s in the drawer.

The framework for strategic coverage:

- Know your user distribution. What devices are actually in your user base? Your analytics can tell you.

- Match test coverage to user reality. If 15% of users are on Xiaomi, Xiaomi should be roughly 15% of your test coverage.

- Include the long tail. The top 10 devices might cover 40% of users. The next 40 devices cover another 40%. Don’t ignore the long tail.

- Test the constraints, not just the flagships. Low RAM. Older OS versions. Slow networks. Battery saver mode. That’s where bugs surface.

Parallel execution makes strategic coverage practical. When you can test 50-100 devices in minutes, you’re not forced to choose between coverage and speed. You can test what your users actually have – on every PR.
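As a rough sketch of the “match test coverage to user reality” step, the snippet below splits a fixed number of device slots across brands in proportion to user share. The shares are the illustrative figures from the example distribution above, not real analytics data, and `allocate_slots` is a hypothetical helper. It uses largest-remainder rounding so the slots always add up to the requested total.

```python
import math

# Illustrative shares (percent of users) from the example distribution above.
USER_SHARE = {
    "Samsung": 40,
    "Xiaomi/Redmi": 15,
    "iPhone": 12,
    "Oppo/Vivo": 8,
    "Realme": 7,
    "OnePlus": 5,
    "Other": 13,
}


def allocate_slots(share: dict[str, float], total_slots: int) -> dict[str, int]:
    """Split `total_slots` across brands in proportion to user share."""
    total_share = sum(share.values())
    exact = {brand: total_slots * pct / total_share for brand, pct in share.items()}
    slots = {brand: math.floor(value) for brand, value in exact.items()}
    # Hand leftover slots to the brands with the largest fractional remainders.
    leftover = total_slots - sum(slots.values())
    by_remainder = sorted(exact, key=lambda b: exact[b] - slots[b], reverse=True)
    for brand in by_remainder[:leftover]:
        slots[brand] += 1
    return slots


plan = allocate_slots(USER_SHARE, total_slots=50)
for brand, count in plan.items():
    print(f"{brand}: {count} devices")
```

A proportional split is only a starting point. Within each brand you would still pick specific models to cover the constraints listed above, such as low RAM and older OS versions.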

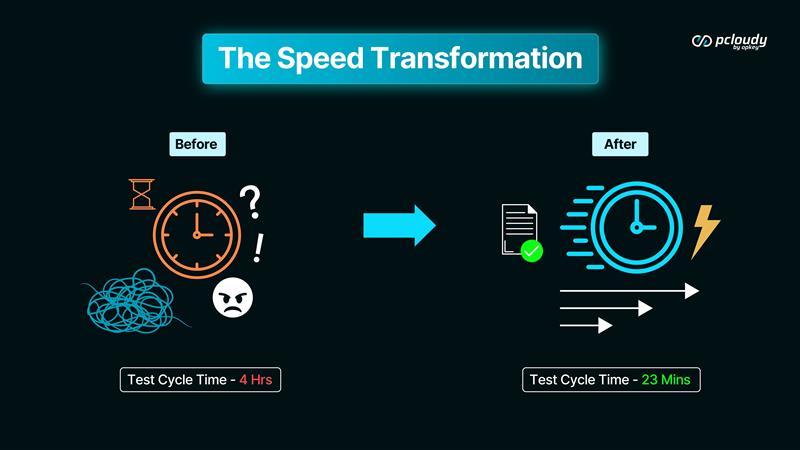

Customer Story: The Speed Transformation

A fintech team came to us with a familiar situation.

45 engineers. Mobile-first product. Growing fast.

Their device testing setup:

- 18 local devices in a shared drawer

- 6 QA engineers coordinating via Slack

- “Who has the iPhone?” was a daily message

- Someone spent 5+ hours/week on device management

Their test cycle time: 4 hours.

Not because the tests were slow, but because the infrastructure created friction.

Serial execution – one device at a time. Context switching while waiting for results. Limited coverage because time didn’t allow more.

The hidden costs they weren’t measuring:

- Developers frustrated waiting for test results

- Features delayed by QA bottleneck

- Bugs slipping through on untested device types

- A QA team that felt like blockers instead of enablers

After moving to Device Cloud:

Test cycle time: 23 minutes.

Same tests. Better coverage – they went from 18 devices to 50+ device configurations.

- Parallel execution across devices (vs. serial)

- Zero device management overhead (vs. 5+ hours/week)

- Coverage matching their actual user base (vs. “whatever’s in the drawer”)

What changed beyond the numbers:

The QA team stopped being the bottleneck. Results arrived while developers still had context. Testing happened on every PR, not just before releases.

Their deployment frequency tripled. Not because someone mandated it – because testing stopped being the constraint.

The QA lead told us: “We went from testing being the thing everyone waited on to testing being the thing that enabled everyone to move faster. Same team. Same skills. Different infrastructure.”

Speed Is the Foundation

Here’s the principle we keep coming back to: Speed isn’t a feature. It’s the foundation everything else builds on.

When devices are available without friction:

- Testing happens more often

- Feedback loops tighten

- Coverage expands to match real users

- Confidence compounds

Device Cloud is Speed made infrastructure. For most teams, that’s the answer. Access the devices you need. Run tests in parallel. Get coverage that matches your users. Without managing any of it.

But some teams can’t use public cloud.

Banks with data residency requirements. Healthcare companies with patient data. Government agencies with security mandates. Defense contractors with air-gapped requirements.

Their data can’t leave their environment. Their compliance teams vetoed shared infrastructure. Their security requirements are non-negotiable.

Does that mean they’re stuck without Speed? Does that mean they accept 4-hour test cycles as the cost of compliance?

No.

Speed shouldn’t require security trade-offs.

Next week: Speed Without Compromise.

- Private Cloud – real devices in your secure environment.

- Lab in a Box – real devices on your premises, under your control.

Same Speed foundation. Same parallel execution. Same coverage possibilities.

The deployment model fits your requirements.

The gap between testing and reality closes the same way.

Questions to Reflect On

What’s your current device testing cycle time? What would change if you could cut it by 80%?