Most app teams have one product to test. One codebase, one device lab, one release pipeline.

One of India’s biggest telecom players had 15.

Fifteen consumer apps – music, movies, television, chat, and more. Each with over 100 million Android downloads. Each managed by a separate team. Each with its own device lab, its own testing tools, its own process. Teams distributed across the country, operating independently, accountable for their own app’s quality but with no visibility into each other’s infrastructure or coverage.

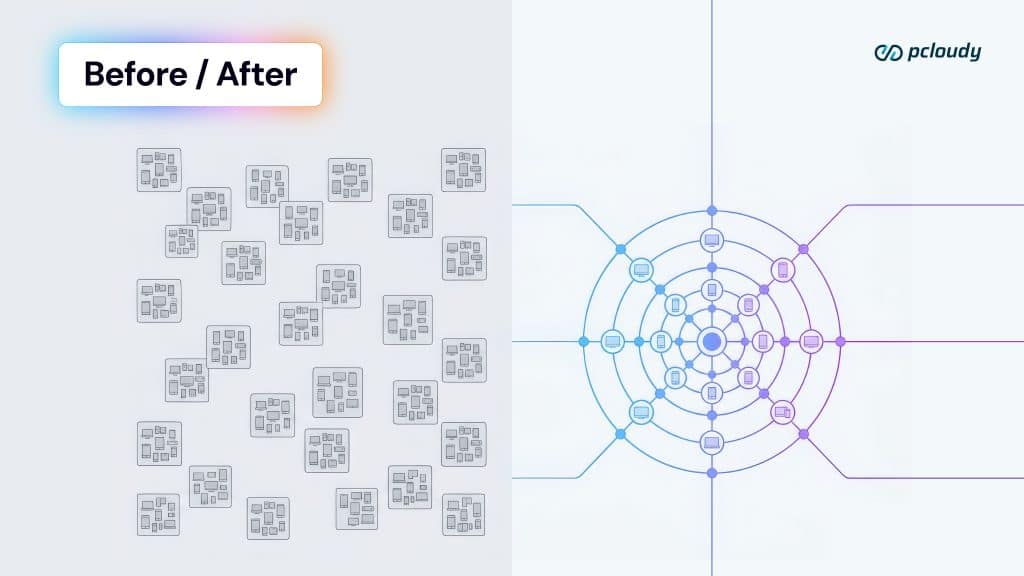

The result: hundreds of physical devices managed manually across multiple siloed teams. Not hundreds of devices in a unified, well-managed infrastructure. Hundreds of devices spread across labs that couldn’t see each other, with all the fragmentation costs that model inevitably produces.

Devices sat idle in one team’s lab while another team queued for access. Duplicate tooling investment accumulated across teams. Engineering time that should have been spent on testing was spent on device coordination, allocation tracking, and lab administration. And underneath all of it: a fast sprint release cycle that needed testing velocity the fragmented model structurally couldn’t deliver.

Test on real devices. Ship with confidence.

The problem wasn’t a shortage of devices. It was a shortage of infrastructure that connected them.

Five Problems That Compound Into One

Fragmented infrastructure produces problems that interact. Each one is manageable in isolation. Together, they define the ceiling the team is working under.

- Manual device management at scale. Managing hundreds of physical devices across distributed teams isn’t a testing problem – it’s a logistics operation. Procurement, maintenance, charging cycles, OS updates, physical allocation. Every hour spent on this is an hour not spent on testing.

- Underutilization without visibility. When each team manages its own device set, no team can see that another team’s devices are sitting idle. The same device model gets purchased three times across three teams because no one knows the other two have it. The asset is present. The asset is invisible.

- Automation blocked by infrastructure. Automation at scale requires shared infrastructure – a platform that all teams can connect to, that can schedule runs, manage queues, and execute across multiple devices in parallel. In a fragmented model where each team has its own isolated tooling, automation hits the ceiling of each team’s individual lab. Scheduled CI runs, regression automation across the app portfolio, large-scale load testing: none of these are achievable on infrastructure that can’t be shared.

- Sprint cadence outpacing manual testing. The telecom operator’s release cycle was fast and frequent – offers, features, promotions, all needing testing before they went live. Manual testing, on fragmented infrastructure, against the breadth of Android devices their users carried, could not keep pace. The testing bottleneck was setting the release timeline.

- SIM testing: the problem nobody solves cleanly. This is the one that’s specific to telecom and it’s the one that most distributed teams quietly accept as unsolvable. More on this below.
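
To make the utilization point in the list above concrete, here is a minimal sketch comparing siloed labs with a shared pool. The team names and device counts are hypothetical and chosen only for illustration; they are not figures from the operator.

```python
def unmet_siloed(devices, demand):
    """Devices wanted but unavailable when each team can use only its own lab."""
    return sum(max(demand[team] - devices[team], 0) for team in devices)


def idle_siloed(devices, demand):
    """Devices sitting unused when each team can use only its own lab."""
    return sum(max(devices[team] - demand[team], 0) for team in devices)


def unmet_pooled(devices, demand):
    """Devices wanted but unavailable when every team draws on one shared pool."""
    return max(sum(demand.values()) - sum(devices.values()), 0)


def idle_pooled(devices, demand):
    """Devices sitting unused when every team draws on one shared pool."""
    return max(sum(devices.values()) - sum(demand.values()), 0)


# Hypothetical snapshot: devices owned per team vs. devices wanted at a peak moment.
devices = {"music": 40, "video": 40, "chat": 40}
demand = {"music": 10, "video": 65, "chat": 35}

print("siloed:", unmet_siloed(devices, demand), "unmet,", idle_siloed(devices, demand), "idle")
print("pooled:", unmet_pooled(devices, demand), "unmet,", idle_pooled(devices, demand), "idle")
```

With these assumed numbers, the siloed model leaves 25 device requests unserved while 35 devices sit idle. The same 120 devices in one pool serve every request with 10 to spare. The hardware is identical in both cases; only the visibility changes.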

The SIM Testing Problem

For a consumer telecom app, SIM-dependent scenarios are not edge cases. They are core product flows.

Network registration when a user joins a new area. Call initiation and termination. SMS sending and delivery. Mobile data session management. Carrier-specific feature interactions. Roaming behavior at coverage boundaries. These are the scenarios that define whether the app works – not in a testing environment, but for a real user on a real network doing the things a telecom subscriber does every day.

All of these scenarios require a real SIM card in a real device on a real network.

And here is the problem that distributed teams face: SIM cards live in physical devices. Physical devices live in labs. Labs are in specific locations. A QA engineer sitting in one city cannot access a SIM card in a lab in another city. Which means distributed teams either don’t test SIM-dependent scenarios, or they travel to the lab, or they duplicate SIM setups across every regional office, compounding the already-fragmented device estate.

None of these are acceptable answers for a team with a sprint release cadence and a user base that expects the app to behave accurately on their network, in their location, on their device.

| Testing approach | SIM coverage | What gets missed |
|---|---|---|
| Emulators | None: no SIM hardware | All network-dependent flows: registration, calls, SMS, data sessions, roaming |
| Simulated network conditions | Partial: bandwidth and latency parameters only | Real carrier behavior, SIM toolkit interactions, roaming edge cases |
| Physical lab, no remote access | Real, but only for collocated teams | All distributed teams test without SIM. National coverage gaps accepted silently. |
| On-premises cloud with central SIM lab | Real SIM access for all teams, from any location, 24/7 | Nothing (this closes the gap) |

The SIM testing gap is the clearest single illustration of what fragmented infrastructure actually costs. It’s not just slow testing – it’s a category of testing that the infrastructure structurally prevents. The distributed team isn’t failing to test SIM scenarios because they don’t know they should. They’re not testing them because the infrastructure makes it physically impossible.

When infrastructure prevents testing rather than enabling it, the coverage gap is invisible in the test results but visible in production.

The Solution: Unification, Not Expansion

The instinct when faced with a device shortage is to add more devices. More labs. More hardware. The instinct when faced with a coordination problem is to add more process – scheduling systems, allocation tools, and shared spreadsheets.

Neither instinct addresses the actual problem.

The telecom operator chose a different path. One constraint shaped everything: they did not want external hosted services. Their devices, their data, their infrastructure – on their premises. This ruled out public cloud testing platforms and pointed directly to on-premises cloud: Pcloudy’s Lab in a Box deployment, set up physically inside the organization’s own environment.

The implementation was built around three decisions that defined the outcome:

Consolidation over expansion.

Rather than continuing to grow the fragmented device estate, the operator replaced the hundreds of manually managed devices across siloed teams with a unified 200-device on-premises cloud, scalable to 500+ as needs grew. Two hundred devices, centrally managed and accessible to every team, produced more effective coverage than hundreds of devices managed in isolation. The asset utilization alone – devices available 24/7 to all teams rather than sitting idle in individual labs – justified the consolidation.

Centralized SIM lab with remote access for all teams.

SIM cards were brought into the central lab and made accessible via the cloud platform from any location across the country. For the first time, a QA engineer in any city could run SIM-dependent test scenarios against real devices with real SIM cards without travelling, without requesting lab access, without waiting. The SIM testing gap – which had previously been accepted as an unavoidable constraint of distributed teams – was resolved at the infrastructure level.
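
As a rough sketch of what a central SIM lab means for an engineer picking a device, the snippet below filters a shared inventory for free, SIM-equipped devices. The `Device` fields, device IDs, and carrier names are hypothetical and do not reflect the platform's actual data model or API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Device:
    device_id: str
    model: str
    sim_carrier: Optional[str]  # None means no SIM card is installed
    in_use: bool = False


def find_sim_devices(inventory: List[Device], carrier: Optional[str] = None) -> List[Device]:
    """Return free devices that have a SIM, optionally limited to one carrier."""
    return [
        d for d in inventory
        if d.sim_carrier is not None
        and not d.in_use
        and (carrier is None or d.sim_carrier == carrier)
    ]


# Hypothetical central-lab inventory.
INVENTORY = [
    Device("dev-001", "Model A", "carrier-x"),
    Device("dev-002", "Model B", None),
    Device("dev-003", "Model C", "carrier-y", in_use=True),
    Device("dev-004", "Model A", "carrier-y"),
]

print([d.device_id for d in find_sim_devices(INVENTORY)])
print([d.device_id for d in find_sim_devices(INVENTORY, carrier="carrier-y")])
```

Note what is absent from the function signature: the engineer's location. In the lab-bound model, location is the first filter applied. In a centralized lab with remote access, it stops being a parameter at all.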

Integration with existing automation frameworks.

Each team had built automation investments on its own tooling. Forcing a migration to new frameworks would have been expensive, disruptive, and complicated across 15 app teams with different tooling histories. Pcloudy’s platform was chosen because of its ability to integrate with the custom automation frameworks already in use. The transition preserved what teams had built while giving those automation investments the shared infrastructure they needed to actually scale.
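
One way to picture "integrate rather than migrate" is an existing test suite that keeps its test logic and changes only where its sessions connect. The sketch below assumes an Appium-style framework, which the case does not specify. The `DEVICE_HUB_URL` variable, the example URL, and the `build_session_config` helper are all hypothetical. The capability keys are standard W3C-style Appium capabilities, not vendor-specific ones.

```python
import os
from typing import Optional


def build_session_config(app_path: str, device_name: str, hub_url: Optional[str] = None) -> dict:
    """Build connection settings for a test session.

    Only the hub URL differs between a team's local lab and a shared device
    cloud; the capabilities and the tests that use them stay the same.
    """
    # Hypothetical environment variable; falls back to a local Appium server.
    resolved_url = hub_url or os.environ.get("DEVICE_HUB_URL", "http://127.0.0.1:4723")
    capabilities = {
        "platformName": "Android",
        "appium:automationName": "UiAutomator2",
        "appium:deviceName": device_name,
        "appium:app": app_path,
    }
    return {"hub_url": resolved_url, "capabilities": capabilities}


# Same suite, pointed at a shared (hypothetical) on-premises endpoint.
shared = build_session_config(
    app_path="/builds/music-app.apk",
    device_name="Model A",
    hub_url="https://device-cloud.example.internal/wd/hub",
)
print(shared["hub_url"])
```

Keeping the endpoint in configuration rather than in test code is what would let 15 teams with different tooling histories share one infrastructure without rewriting their suites.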

What Changed, And What Became Possible?

| Dimension | Before | After |
|---|---|---|
| Device management | Hundreds of devices managed manually, siloed across app teams | 200-device on-premises cloud, scalable to 500+ |
| Team access model | Each app team: separate lab, separate devices, separate tools | Unified platform: all teams, all apps, one infrastructure |
| SIM access | Lab-bound: teams required physical presence to test | SIM in central lab, accessible remotely by all teams nationwide |
| Automation | Manual-dominant: automation couldn’t scale on fragmented infrastructure | Scheduled automated CI runs, regression automation at scale |
| Device utilization | Low: idle devices in some teams, access queues in others | Optimized 24/7: no idle hardware, no access bottlenecks |
| Parallel / load testing | Not possible at required scale | Large-scale simultaneous load testing on real devices |
| Release environment | Manual-bottlenecked sprint cycle | DevOps-aligned: release time reduced significantly through automation |
| Security posture | Internal, per-team: custom and inconsistent | On-premises cloud: stringent security requirements met, custom frameworks integrated |

The operational outcomes were substantial: devices utilized around the clock across all teams, significant time savings in manual and automated testing, scheduled automated runs in continuous integration mode, regression automation operating at scale, SIM access for all teams nationwide, large-scale simultaneous load testing on real devices, and a move toward a DevOps-aligned release environment, with release time reduced significantly through automation.

But the outcome that matters more than any individual metric is the category of testing that unified infrastructure made possible for the first time.

Large-scale load testing across real devices – not possible on fragmented infrastructure – became routine. Scheduled CI automation across the full app portfolio – not achievable when each team operated in isolation – became the standard release process. SIM-dependent scenario testing for every distributed team member – structurally prevented by the previous model – became a normal part of the testing cycle.

These weren’t improvements to existing capabilities. They were capabilities that hadn’t existed. The infrastructure had been blocking them, not through policy, but through architecture.
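
To illustrate why parallel execution depends on a shared pool, here is a minimal sketch that shards a test list across whatever devices are available and runs the shards concurrently. The `run_on_device` function is a stand-in that only records results. A real runner would open a session on each device. All names here are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def shard(tests, device_ids):
    """Distribute tests round-robin across the available devices."""
    shards = {device_id: [] for device_id in device_ids}
    for index, test in enumerate(tests):
        shards[device_ids[index % len(device_ids)]].append(test)
    return shards


def run_on_device(device_id, tests):
    """Placeholder runner: pretends every test passes on the given device."""
    return [(device_id, test, "passed") for test in tests]


def run_suite(tests, device_ids):
    """Run each shard on its own device in parallel and collect all results."""
    shards = shard(tests, device_ids)
    with ThreadPoolExecutor(max_workers=len(device_ids)) as pool:
        futures = [pool.submit(run_on_device, d, ts) for d, ts in shards.items()]
        results = []
        for future in futures:
            results.extend(future.result())
    return results


tests = ["login", "playback", "search", "checkout", "profile"]
results = run_suite(tests, ["dev-001", "dev-004"])
print(len(results), "tests executed across 2 devices")
```

The degree of parallelism is simply the length of `device_ids`. A team limited to its own lab caps that number at whatever it owns. A team drawing on a unified pool caps it at whatever is free across the whole organization.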

Three Takeaways That Travel Beyond Telecom

The telecom operator’s situation is specific – 15 consumer apps, sprint cadence, distributed teams, SIM-dependent testing. But the lessons the case produces apply to any organization managing a multi-team testing infrastructure.

- Fragmentation is the cost that compounds invisibly. Every siloed lab carries overhead: device procurement, maintenance, allocation coordination, duplicate tooling investment. Most organizations see the individual cost and miss the aggregate. When you add underutilized hardware, untestable SIM scenarios, blocked automation, and a bottlenecked release cycle, the true cost of fragmented infrastructure is significantly larger than any line item on a device procurement budget.

- The SIM testing gap is accepted more often than it is solved. For any organization with SIM-dependent testing requirements and distributed teams, the gap between ‘scenarios we should test’ and ‘scenarios we can test’ is often quietly accepted as a structural constraint. It isn’t. Centralized SIM infrastructure with remote access resolves it at the architecture level. Teams that close this gap don’t just test more — they test the scenarios that are most likely to surface failures in production.

- Consolidation produces more effective coverage than expansion. The counterintuitive lesson from this case: fewer centrally managed devices covering more teams produced better outcomes than hundreds of fragmented devices covering siloed teams. Consolidation improves asset utilization, enables shared automation infrastructure, unlocks parallel and load testing, and eliminates the coordination overhead that fragmented models generate. When the device estate is right-sized and unified, the coverage it produces is more reliable — not less.

The underlying principle is simple but consistently underestimated: testing infrastructure that teams cannot fully access is not testing infrastructure they actually have. The devices exist. The capability doesn’t, because the architecture prevents teams from reaching it.

Scale is not solved by more devices. It is solved by unified infrastructure that every team can reach.

Is your organization’s testing infrastructure fragmented across teams — with the coordination overhead, coverage gaps, and blocked automation that fragmentation produces? Talk to our team about on-premises unified device cloud and what consolidation looks like for your app portfolio.